The Week That Shifted Software Development

Three days. That's how long it took for the AI coding landscape to fundamentally change in February 2026.

On February 24, Cursor launched Cloud Agents: autonomous AI agents running in isolated Linux VMs that independently write code, run tests, and deliver merge-ready pull requests with video demonstrations. The same day, Anthropic shipped enterprise plugins for Claude Cowork, with industry-specific AI agents for finance, HR, legal, and engineering. Three days later, OpenAI reported that Codex had reached 1.6 million weekly active users, three times as many as at the start of the year.

If you work in software development, you felt this. Not as an abstract vision, but as a concrete shift in daily work. At IJONIS, we use these tools every day — Cursor, Claude Code, custom agentic workflows. This article isn't a product comparison. It's an honest field report.

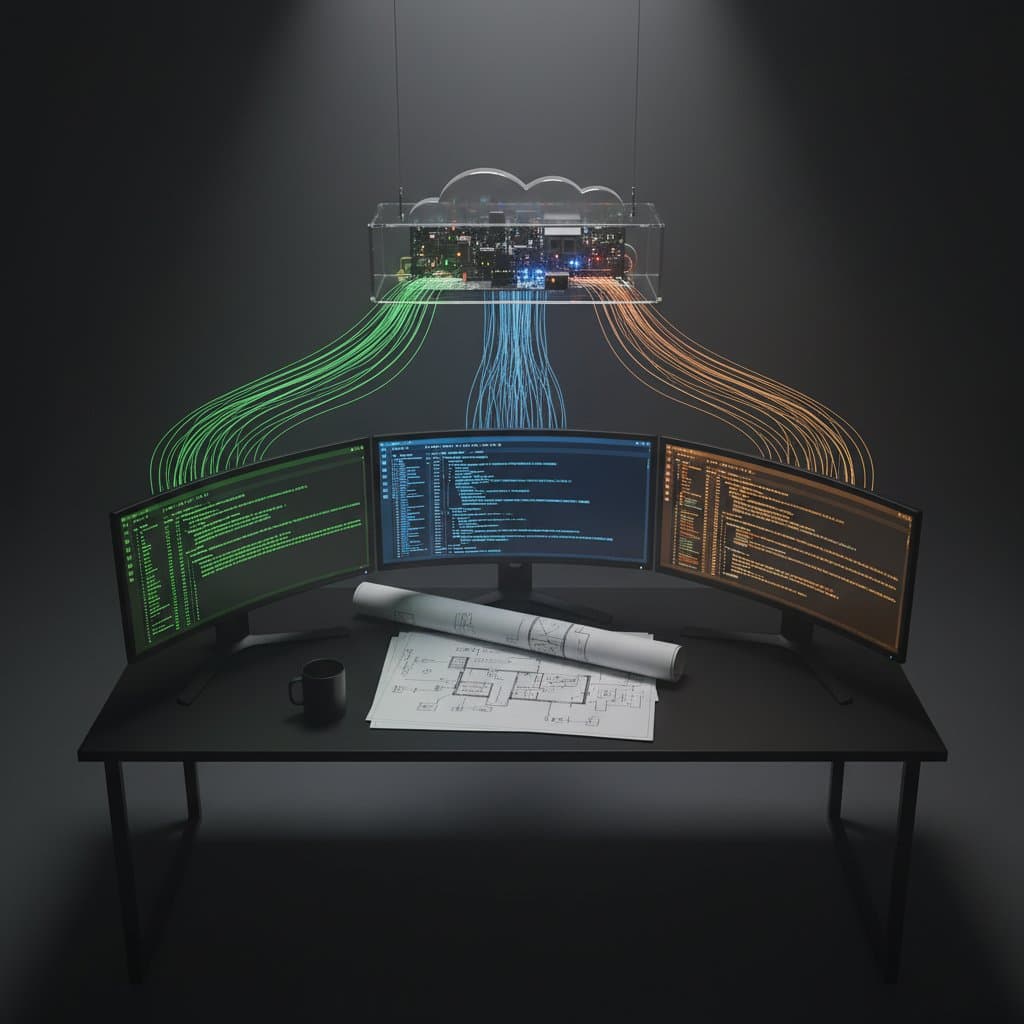

What Cursor Cloud Agents Actually Are

Cursor calls this the "third era" of AI-assisted coding. The evolution:

- Tab autocomplete — AI suggests the next line

- Synchronous agents — prompt-response loops in the editor

- Cloud Agents — autonomous agents working independently while you do something else

The technical core: each Cloud Agent gets its own isolated Linux VM. It reads your repository, writes code, runs tests, and delivers a finished pull request — complete with a video recording of its work, screenshots, and logs. Instead of reading a diff and mentally simulating whether it works, you watch a 30-second video of the agent demonstrating the feature.

This isn't a lab experiment. Cursor reports that 35% of its internal merged pull requests come from agents, and that is production code shipping to millions of users.

What This Means in Practice

- 10–20 agents in parallel per user

- Access via web, desktop, mobile, Slack, and GitHub

- Remote desktop access to the agent's VM for manual testing

- Pricing: $20/month (Pro), $60/month (Pro+), $200/month (Ultra), with Cloud Agents available on all paid plans
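To make the "10–20 agents in parallel" point concrete, here is a minimal sketch of bounded parallel delegation. It does not use any Cursor API: `run_agent`, `dispatch`, and the ticket names are hypothetical stand-ins, and the agent run is simulated with a short sleep. The only idea it illustrates is handing off many tickets while capping how many run at once.

```python
import asyncio

MAX_PARALLEL_AGENTS = 10  # lower end of the 10-20 range quoted above


async def run_agent(task: str) -> str:
    """Hypothetical stand-in for one agent run (a real run would take minutes)."""
    await asyncio.sleep(0.01)  # simulated work
    return f"PR ready: {task}"


async def dispatch(tasks, limit=MAX_PARALLEL_AGENTS):
    """Run every task, never more than `limit` at once; results keep input order."""
    slots = asyncio.Semaphore(limit)

    async def guarded(task):
        async with slots:  # wait for a free agent slot
            return await run_agent(task)

    return await asyncio.gather(*(guarded(t) for t in tasks))


tickets = [f"ticket-{n}" for n in range(25)]
results = asyncio.run(dispatch(tickets))
print(len(results), "pull requests;", "first:", results[0])
```

With 25 tickets and a limit of 10, the remaining 15 simply wait for a free slot. The human's job shifts from writing the code to reviewing whatever comes back.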

Cursor vs. Claude Code vs. Codex — The Honest Comparison

Press releases tell one story. Daily work tells another. Here's what we actually see:

| Tool | Execution | Parallelism | Self-Testing | Artifacts | Core Strength |
|---|---|---|---|---|---|
| Cursor Cloud Agents | Cloud VMs | 10–20 agents | Yes, with video | Videos, screenshots, logs | Parallel execution |
| Claude Code | Local | Single session | Yes, no video | Diffs, conversation | Architecture & depth |
| OpenAI Codex | Cloud + local | Multiple projects | Yes | Diffs | Speed & scale |
| GitHub Copilot | Cloud | One workspace | Limited | Diffs | Platform integration |

Claude Code: The Quiet Market Leader

For a detailed head-to-head breakdown of Cursor vs Claude Code — including pricing, workflow examples, and when to use which — see our full Cursor vs Claude Code comparison.

What often gets lost in the coverage: measured by actual output, Claude Code is already the most impactful AI coding agent. According to the SemiAnalysis report "Claude Code is the Inflection Point", 4% of all public GitHub commits now come from Claude Code — projected to reach 20% by year-end.

Independent benchmarks show Claude Code uses 5.5x fewer tokens than Cursor for identical tasks. Fewer tokens mean lower costs and faster execution.
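A short worked calculation shows what "5.5x fewer tokens" amounts to. It assumes the phrase means the same task needs 1/5.5 of the tokens, and that cost scales linearly with token count. The baseline of 1,100,000 tokens is a hypothetical figure chosen for round numbers, not a benchmark result.

```python
def token_savings(baseline_tokens: int, ratio: float = 5.5) -> dict:
    """Tokens used and fraction saved if a tool needs `ratio` times fewer tokens."""
    return {
        "tokens_used": baseline_tokens / ratio,
        "fraction_saved": 1 - 1 / ratio,
    }


# Hypothetical task that costs 1,100,000 tokens with the baseline tool.
result = token_savings(1_100_000)
print(f"{result['tokens_used']:,.0f} tokens, {result['fraction_saved']:.0%} saved")
# -> 200,000 tokens, 82% saved
```

Under those assumptions, a 5.5x ratio corresponds to roughly an 82% reduction in token spend per task.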

We use Claude Code daily through the Claude Agent SDK. Our open-source project GEO Lint — a linter with 92 rules for AI search visibility — was built almost entirely with Claude Code. Not as a demo. As production software published on npm and used by other teams.

OpenAI Codex: The Scale Machine

Codex bets on sheer distribution. The Mac app hit one million downloads in its first week. With the new GPT-5.3-Codex model, the system delivers over 1,000 tokens per second. Internally, 95% of OpenAI's engineers use Codex weekly — resulting in 70% more pull requests.

GitHub Copilot: From Product to Platform

The most interesting strategic shift: since February 2026, GitHub Copilot has offered Claude and Codex as agents within Copilot. Copilot is becoming an orchestration layer, a meta-platform rather than a single tool. With 20 million users and 90% of the Fortune 100 as customers, that's a position none of the other tools can ignore.

From Vibe Coding to Agentic Coding — Where Are We Really?

Last year, we wrote about Vibe Coding — the idea that programming becomes a dialogue between human and AI. Describe what you need. The AI writes alongside you. Iterate.

Cursor Cloud Agents shift this paradigm. It's no longer about dialogue. It's about delegation. Development has gone through three phases:

- AI as autocomplete (2022–2023) — Copilot suggests the next line. Accept or reject.

- AI as junior developer (2024–2025) — Vibe Coding. Conversation, context, iteration. AI writes entire functions but needs constant guidance.

- AI as autonomous contributor (2026) — Cloud Agents. AI receives a ticket, works independently, delivers a finished PR with video proof.

Reality Check

We've arrived at phase 3 — but with a major caveat. Autonomous doesn't mean autonomous in the human sense. AI agents deliver reliably on well-defined, repeatable tasks. For tasks requiring judgment, contextual knowledge, or creative problem-solving, human intelligence remains irreplaceable.

What Works — And What Doesn't

After over a year of daily AI coding agent use, we have a clear picture:

Where AI Agents Excel

- Boilerplate code: Form validation, CRUD endpoints, database schemas — 70–80% time savings

- Refactoring: Renaming across 50 files, type conversion, API migration — work that took days, done in minutes

- Test generation: Unit tests, edge cases, test data — the agent writes 30 tests before a human has formulated the third

- Code exploration: "How does authentication work in this project?" — the agent reads the codebase faster than any documentation

- Prototyping: From idea to working MVP in hours instead of weeks

Where AI Agents Fail

- Architecture decisions: "Should we build a monolith or microservices?" — AI delivers plausible answers for both options. The right choice requires business context no prompt can convey.

- Novel problem-solving: When the problem has never been solved before, AI hallucinates solutions that are syntactically correct but semantically wrong.

- Security-critical code: Authentication, cryptography, permission systems — "almost right" is worse than obviously wrong.

- Understanding legacy systems: 15 years of accumulated codebase with unwritten rules. The agent sees the code but not the history behind it.

- Domain logic: Business rules, regulatory requirements, industry-specific constraints — the agent doesn't know commercial law.

The 80/20 Reality

"I can't write a single line of code by hand — yet I've shipped multiple SaaS products in nine months. That's possible because AI handles the routine work while I focus on architecture and business logic." — Jamin Mahmood-Wiebe, Founder of IJONIS

This is the most honest summary we can give: AI agents handle 80% of the routine. But the remaining 20% — architecture, quality judgment, security, domain knowledge — doesn't become less important. It becomes ten times more valuable. Because it's the bottleneck.
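One way to put numbers on the bottleneck argument is Amdahl's law. That framing is ours and is an illustration, not a measurement. The sketch assumes the 80% figure is a share of total effort and that only this share gets faster, while the remaining 20% takes as long as before. The speedup factors of 2, 10, and 100 are hypothetical.

```python
def overall_speedup(routine_share: float, routine_speedup: float) -> float:
    """Amdahl's law: total speedup when only `routine_share` of the work gets faster."""
    remaining = 1.0 - routine_share  # the part that stays at human speed
    return 1.0 / (remaining + routine_share / routine_speedup)


for s in (2, 10, 100):
    print(f"routine work {s}x faster -> {overall_speedup(0.8, s):.2f}x overall")
# routine work 2x faster -> 1.67x overall
# routine work 10x faster -> 3.57x overall
# routine work 100x faster -> 4.81x overall
```

However fast the routine part becomes, the total speedup under these assumptions cannot exceed 1 / 0.2 = 5x. Past that point, every further gain has to come from the human 20%.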

What Changes for Development Teams

The consequences go beyond tools. Working with AI coding agents requires adjusting roles, processes, and evaluation criteria.

Code Review Becomes More Important, Not Less

When an agent delivers 20 pull requests per day, review becomes the bottleneck. This isn't a side task — it's the central quality control. Teams need review expertise, not more manual coders.
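A rough calculation shows why review becomes the bottleneck. The 20 pull requests per day come from the paragraph above. The 15 and 30 minutes per review are assumptions for illustration, not measured values.

```python
def review_hours_per_day(prs_per_day: int, minutes_per_review: float) -> float:
    """Daily reviewer time needed to look at every pull request once."""
    return prs_per_day * minutes_per_review / 60


# 20 PRs/day is the figure from the text; minutes per review are assumed.
for minutes in (15, 30):
    hours = review_hours_per_day(20, minutes)
    print(f"{minutes} min per review -> {hours:.1f} reviewer hours per day")
# 15 min per review -> 5.0 reviewer hours per day
# 30 min per review -> 10.0 reviewer hours per day
```

Even at the optimistic end, a single agent stream consumes most of one reviewer's working day.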

The Stack Overflow Developer Survey 2025 confirms this tension: 84% of developers use AI tools, but only 3% trust the output unconditionally. 66% report frustration with AI solutions that are "almost right, but not quite."

"The future of AI is about orchestration of tokens, not just selling tokens at base cost." — SemiAnalysis, Claude Code is the Inflection Point

Architecture Skills Become Premium

When code becomes a commodity, architecture becomes the differentiator. Those who can design systems — data models, API boundaries, security architectures, scaling patterns — become more valuable than ever. Those who only write code become substitutable by agents.

This is my personal experience: I can't write a single line of code by hand. Yet I've shipped multiple SaaS products and agentic workflows in nine months. Not because I can code, but because I think in systems, architecture, and constraints. Code is a commodity. Architecture is not.

Prompt Engineering Becomes a Core Skill

"Teams that invest in review expertise and architecture skills today will ship five times faster in six months than those ignoring AI agents. This isn't hype — it's measurable reality." — Jamin Mahmood-Wiebe, Founder of IJONIS

The quality of agent output depends directly on the quality of instructions. CLAUDE.md files, structured prompts, context engineering — these aren't nice-to-haves. They're the levers that determine whether an agent produces usable or unusable code.

New Metrics for AI-Augmented Teams

Old metric: lines of code per developer. New metrics: quality of architecture decisions, number of security vulnerabilities prevented, and speed of iteration. Teams that understand this ship 5× faster than teams ignoring AI agents.

What Businesses Should Ask Their Development Partners

If you're hiring external development teams, ask:

- Which AI coding tools do you use daily? No answer is a red flag. Listing tools without concrete workflows is too.

- What does your review process for AI-generated code look like? "We review everything" is the right answer. "AI doesn't make mistakes" is disqualifying.

- Show me a real project built with AI agents. Experience beats theory.

- How do you ensure security in AI-generated code? The answer should be specific — not "we pay attention."

IJONIS in Practice

We use Claude Code, Cursor, and custom agentic workflows daily — from our Hamburg office and remotely. From our open-source linter GEO Lint to complex SaaS products. This isn't a marketing claim — it's verifiable reality on GitHub.

The Valuation Landscape — Numbers That Show the Momentum

Investment figures reveal the strategic importance of this market:

| Company | Valuation | Latest Round | Revenue (ARR) |
|---|---|---|---|
| OpenAI | $840B | $110B | $29.4B (2026 target) |
| Anthropic | $380B | $30B | $14B |
| Cursor | $29.3B | $2.3B | $1B+ |

Cursor hit $1 billion ARR in under 24 months — the fastest SaaS growth ever recorded. With roughly 300 employees, that's $3.3 million in revenue per person. These numbers aren't accidental. They show development teams worldwide are willing to pay for AI coding tools because the productivity gains are real.

The "SaaSpocalypse" — Why Software Stocks Are Crashing

A side effect affecting the entire software industry: since Anthropic introduced Claude Cowork on January 30, 2026, SaaS companies have lost $285 billion in market capitalization. Intuit: -33%. Thomson Reuters: -31%. ServiceNow: -23%. Salesforce: -22%.

The logic: if AI agents can do the work of 100 employees, a company doesn't need 100 SaaS licenses anymore. Foundation model providers — Anthropic, OpenAI — are now competing directly with the application-layer software built on top of them.

For development teams, this means: the tools you build software with are also changing the software your clients buy. Understanding this dynamic is how you build the right products.

What Do AI Coding Agents Mean for Your Next Project?

Cursor Cloud Agents, Claude Code, and OpenAI Codex aren't future concepts. They're tools that work today, with clear strengths and equally clear limitations.

Development teams using these tools ship faster. Teams that don't use them fall behind. But "using" doesn't mean "blindly trusting." It means mastering architecture, reviewing code, ensuring quality, and delegating the 80% of routine work that doesn't require human creativity.

Frequently Asked Questions

What are Cursor Cloud Agents?

Cursor Cloud Agents are autonomous AI coding agents running in isolated Linux VMs in the cloud. They read your repository, write code, run tests, and deliver merge-ready pull requests with video demos, screenshots, and logs. Each user can run 10–20 agents simultaneously.

How much do Cursor Cloud Agents cost?

Cloud Agents are available on all Cursor paid plans: Pro ($20/month), Pro+ ($60/month), and Ultra ($200/month). The difference is the credit pool — more credits allow longer and more parallel agent sessions.

What's the difference between Cursor and Claude Code?

Cursor Cloud Agents run parallel cloud VMs with video verification — ideal for many simultaneous, well-defined tasks. Claude Code works locally and excels at deep context understanding and token efficiency — ideal for architecture work and complex feature development. In daily use, both tools complement each other.

Will AI replace software developers?

No — but the role is changing. AI agents handle routine work (boilerplate, tests, refactoring). Architecture, security, domain logic, and quality judgment remain core human competencies. Teams using AI agents deliver faster — but they need experienced developers for review and architecture.

Is AI-generated code secure?

AI-generated code requires the same (or stricter) review process as manually written code. Especially for authentication, cryptography, and permission systems, AI code should be reviewed by specialists. Tools like Claude Code deliver diffs that can be systematically reviewed — but blind trust is a security risk.

What's the difference between Vibe Coding and Agentic Coding?

Vibe Coding describes dialogue-based programming, in which human and AI take turns. Agentic Coding goes further: AI works autonomously on tasks without constant human guidance and independently delivers finished results. Cursor Cloud Agents are the most thorough implementation of this concept.